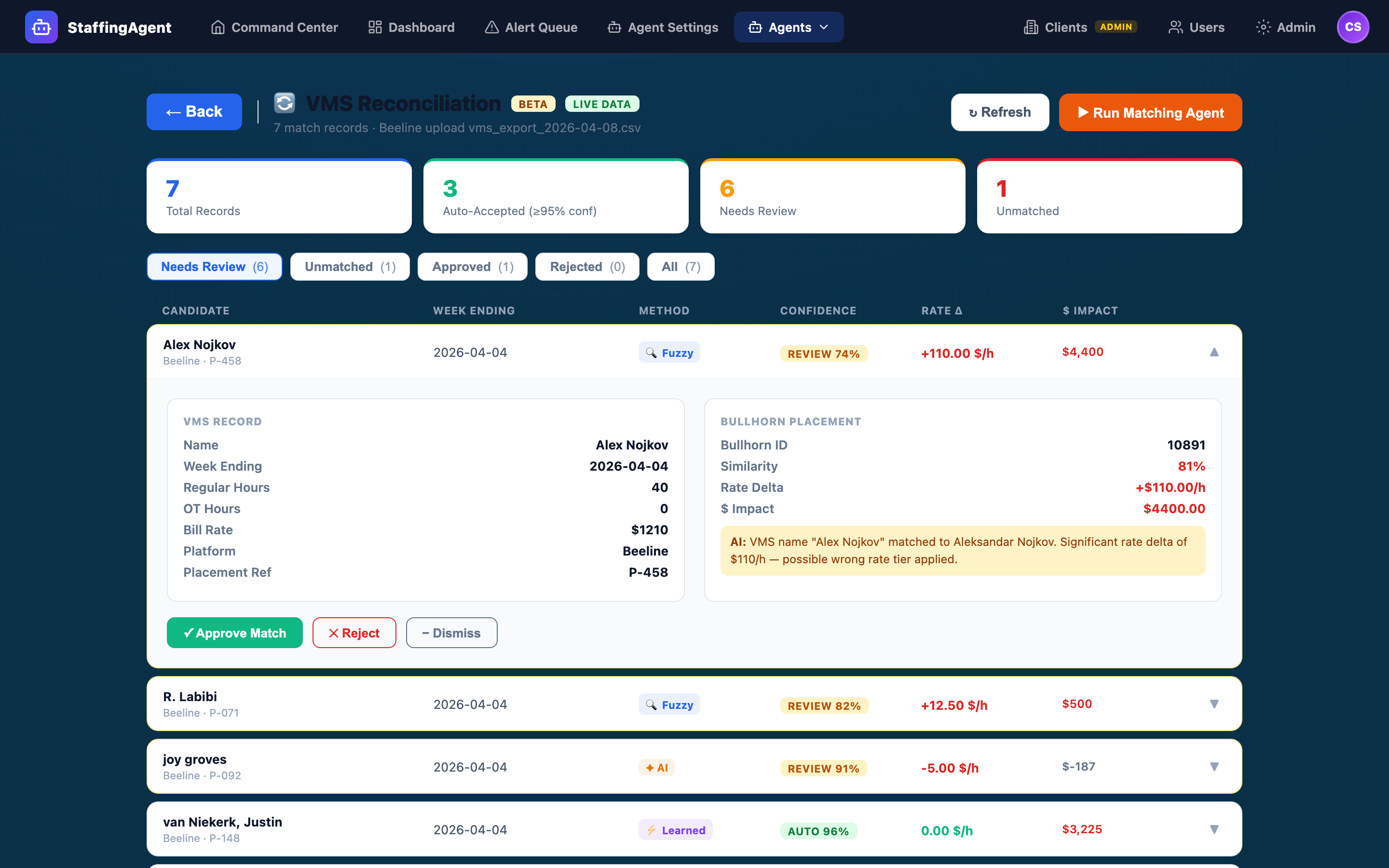

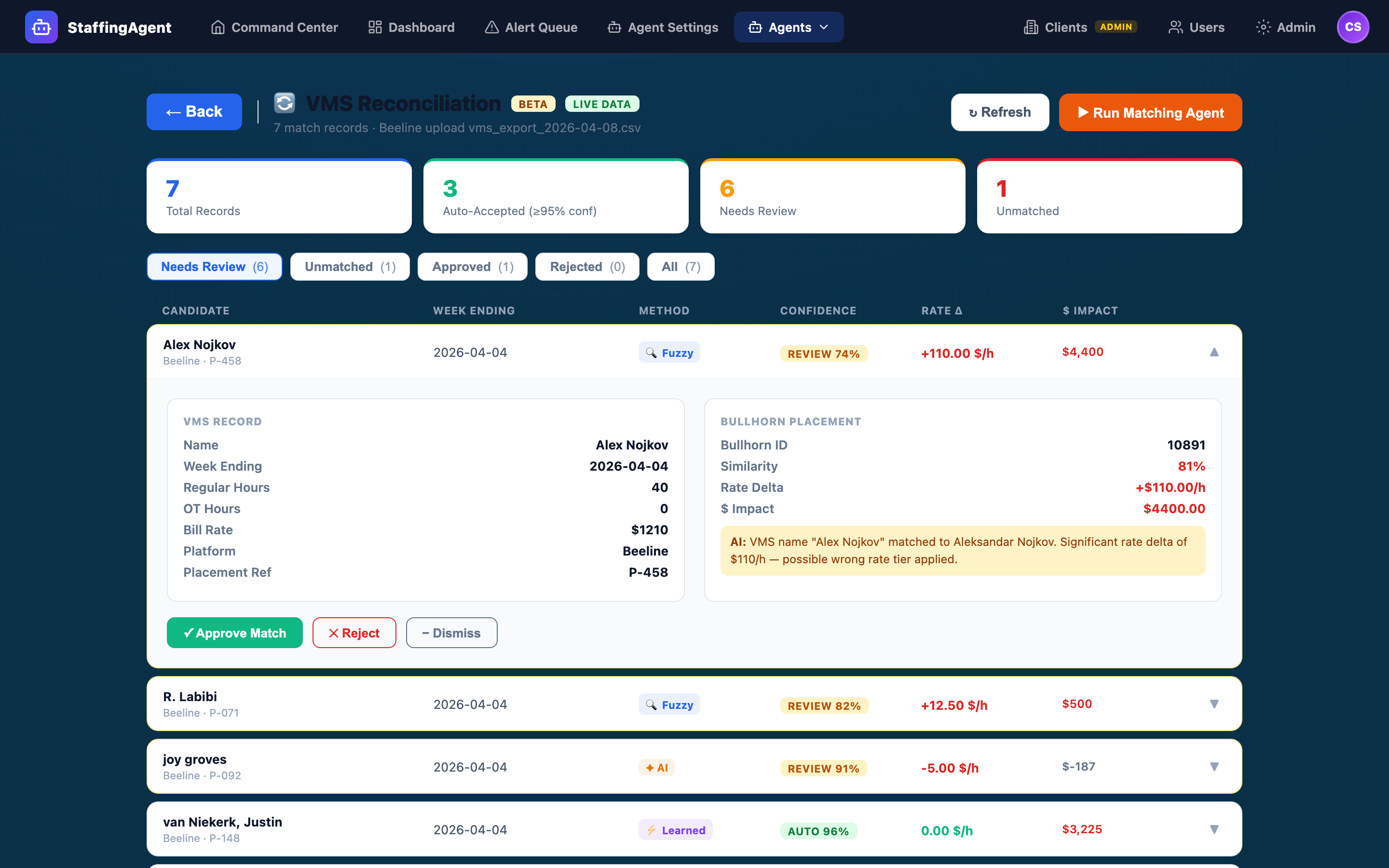

VMS Reconciliation Agent

Four match strategies run in order — Learned, Exact, Fuzzy, AI — and every result is routed by confidence tier. ≥95% auto-approves. 70–94% goes to human review. <70% is flagged. Side-by-side VMS↔ATS compare for every match.

Fieldglass, Beeline, VectorVMS, and Magnit all hold the same placements as your ATS, just under different IDs, with different field names, and occasionally different rates or hours. Reconciling by hand is slow, error-prone, and dependent on institutional knowledge (one senior analyst typically owns it). Whatever slips through becomes an underbilled or overbilled invoice, or an aging dispute the client won’t pay.

For every VMS record the agent receives, it runs four matching strategies in sequence. The highest-confidence result wins and is attached to the record as its “match candidate.” Every candidate is persisted with its confidence score, the matching strategy that produced it, and the fields that agreed vs. disagreed.
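As a rough illustration of "run every strategy, keep the highest-confidence result," here is a minimal Python sketch. The `MatchCandidate` shape, the `best_candidate` function, the stub strategies, and the IDs are all hypothetical names chosen for this example, not the agent's actual API. It also assumes that ties go to the strategy that ran first.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence, Tuple


@dataclass(frozen=True)
class MatchCandidate:
    """Hypothetical shape of a persisted match candidate."""
    placement_id: str                # ATS placement the VMS record was matched to
    strategy: str                    # which strategy produced the match
    confidence: float                # 0-100
    agreed: Tuple[str, ...] = ()     # fields that agreed
    disagreed: Tuple[str, ...] = ()  # fields that disagreed


# A strategy takes a VMS record and returns a candidate, or None if it finds no match.
Strategy = Callable[[dict], Optional[MatchCandidate]]


def best_candidate(vms_record: dict,
                   strategies: Sequence[Strategy]) -> Optional[MatchCandidate]:
    """Run the strategies in order and keep the highest-confidence result."""
    candidates = [c for c in (run(vms_record) for run in strategies) if c is not None]
    # max() returns the first maximum, so an earlier strategy wins a tie (assumption).
    return max(candidates, key=lambda c: c.confidence, default=None)


# Stub strategies with made-up results, for illustration only.
def learned(record: dict) -> Optional[MatchCandidate]:
    return None  # no previously confirmed pair for this record


def exact(record: dict) -> Optional[MatchCandidate]:
    return MatchCandidate("P-1001", "exact", 97.0, agreed=("external_id",))


def fuzzy(record: dict) -> Optional[MatchCandidate]:
    return MatchCandidate("P-1001", "fuzzy", 82.0,
                          agreed=("name", "client"), disagreed=("rate",))


winner = best_candidate({"vms_id": "FG-778"}, [learned, exact, fuzzy])
print(winner.strategy, winner.confidence)  # exact 97.0
```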

Learned. The same VMS ID ↔ ATS placement pair was confirmed in a previous reconciliation. Near-instant, highest confidence. Typical: 98–100%.

Exact. Exact match on one or more of: external ID, placement number, candidate email, SSN last-4 combined with name. Typical: 95–99%.

Fuzzy. Normalized-name similarity, rate within tolerance, overlapping start/end dates, same client, weighted into a score. Typical: 70–94%.

AI. Structured LLM compare over name, client, role, rate, dates. Emits a confidence score and a reasoning pill for every match. Typical: 60–90%.

Once a candidate has a winning strategy and confidence score, it’s routed automatically. The cutoffs are configurable per tenant in Agent Settings, but the defaults mirror what experienced middle-office analysts already do by hand.
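The default tiers (≥95% auto-approves, 70–94% goes to human review, <70% is flagged) can be sketched as a small routing function. `RoutingThresholds` and `route` are hypothetical names, and the sketch assumes fractional scores between 94 and 95 fall into human review. Per-tenant configuration is modeled simply by passing different thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoutingThresholds:
    """Hypothetical per-tenant cutoffs; defaults follow the tiers described above."""
    auto_approve: float = 95.0
    review: float = 70.0


def route(confidence: float,
          thresholds: RoutingThresholds = RoutingThresholds()) -> str:
    """Map a 0-100 confidence score to a routing tier."""
    if confidence >= thresholds.auto_approve:
        return "auto_approve"
    if confidence >= thresholds.review:
        # Assumption: anything in [review, auto_approve) goes to a human.
        return "human_review"
    return "flagged"


print(route(97.0))  # auto_approve
print(route(82.0))  # human_review
print(route(64.0))  # flagged
```

A stricter tenant could call `route(score, RoutingThresholds(auto_approve=98.0))` to send more matches to review.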

When a human reviews a match, the row expands to show the VMS record on one side and the ATS placement on the other, field-by-field, with agreement indicators on each field. Above the compare sits the AI reasoning pill explaining why the agent scored the match the way it did. One click to approve. One click to reject. One click to request a re-match with different fields.
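The per-field agreement indicators could be computed along these lines. This is a simplified sketch: `compare_fields` is a hypothetical helper, the field names are examples, and it compares values as trimmed, case-insensitive strings only. A real comparison would presumably apply rate tolerances and date-overlap rules instead.

```python
from typing import Dict, Optional, Sequence


def _norm(value: object) -> Optional[str]:
    """Normalize a value for comparison: trim whitespace, ignore case."""
    return None if value is None else str(value).strip().casefold()


def compare_fields(vms: dict, ats: dict, fields: Sequence[str]) -> Dict[str, bool]:
    """Return {field: agrees?} for a side-by-side VMS vs. ATS view.

    Assumption: a field missing on either side counts as a disagreement.
    """
    result = {}
    for name in fields:
        left, right = _norm(vms.get(name)), _norm(ats.get(name))
        result[name] = left is not None and left == right
    return result


vms_record = {"name": " Jane Doe ", "client": "ACME", "rate": 85.0}
ats_placement = {"name": "jane doe", "client": "Acme", "rate": 87.5}
print(compare_fields(vms_record, ats_placement, ["name", "client", "rate"]))
# {'name': True, 'client': True, 'rate': False}
```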

Everything you do here writes to the audit log: actor, timestamp, decision, and the match candidate that was accepted or rejected. If a re-match is requested, the new candidate shows up in the queue immediately.
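An audit entry carrying those four pieces of information might look like the following. The `AuditEntry` fields, the decision names, and the JSON-lines storage are assumptions made for illustration. The actual log format is not specified here.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List

# Hypothetical decision names for the three one-click actions.
DECISIONS = {"approve", "reject", "rematch"}


@dataclass(frozen=True)
class AuditEntry:
    actor: str         # who made the decision
    timestamp: str     # ISO 8601, UTC
    decision: str      # one of DECISIONS
    candidate_id: str  # the match candidate that was accepted or rejected


def record_decision(log: List[str], actor: str, decision: str,
                    candidate_id: str) -> AuditEntry:
    """Append one JSON-encoded entry to an in-memory log and return it."""
    if decision not in DECISIONS:
        raise ValueError(f"unknown decision: {decision!r}")
    entry = AuditEntry(actor, datetime.now(timezone.utc).isoformat(),
                       decision, candidate_id)
    log.append(json.dumps(asdict(entry)))
    return entry


audit_log: List[str] = []
record_decision(audit_log, "analyst@example.com", "approve", "cand-001")
print(audit_log[0])
```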

Currently in Beta on the Transform tier. Every tenant starts in dry-run for the first full reconciliation batch.